
AI agents in fraud prevention and investigation: A responsible deployment guide

Fraud teams are under growing pressure to keep pace with threats that are becoming more sophisticated, coordinated, and difficult to detect with traditional controls.

Part of the challenge is operational. Fraud signals often sit across separate systems: identity verification tools, device intelligence, network data, transaction monitoring platforms, and case management workflows. Each system may surface a useful signal, but when those signals remain fragmented, analysts are left to piece together the full picture manually.

That makes investigation harder than it needs to be. An alert may show that something looks unusual, but the team still needs to understand why it was flagged, how it fits the customer’s history, and whether it points to genuine fraud or a false positive.

This is where agentic AI can start to help. In Taktile’s guide to responsibly deploying AI agents in financial institutions, we describe three priorities for an agentic fraud system:

1. Contextual investigation

2. Cross-signal synthesis

3. Adaptive learning

For fraud prevention and investigation teams, that means using agents to gather context, reason across disconnected tools, and learn from analyst feedback over time. The opportunity is not just to review alerts faster, but to give teams a clearer, more connected view of risk so they can focus their expertise on the cases that matter most.

Why fraud is well-suited to agentic AI

Fraud decisions often require more than a single rule or score.

A transaction may look unusual in isolation but reasonable in the context of a customer’s history. A document mismatch may be a harmless formatting issue or an early signal of synthetic identity fraud. Or a chargeback may be legitimate, opportunistic, or part of a broader pattern.

Agents can help because they can gather context, compare signals, reason across multiple sources, and decide whether a case should be auto-resolved, monitored, or escalated to a human reviewer.

The value is not just speed. It is better prioritization: helping analysts spend less time on obvious false positives and more time on cases where deeper investigation is needed.

Use case 1: Application consistency checks

Fraud teams often need to compare customer-submitted information across multiple verification tools. Each tool may provide a useful signal, but the harder question is whether the full customer profile makes sense.

An application consistency check agent can help by reviewing documents, application data, and third-party sources together. For example, if the address on a utility statement differs from the one on a driver's license, the agent can assess whether that mismatch looks like a harmless formatting issue, a simple customer error, or something that needs deeper review.

From there, the agent can classify the level of concern and escalate only the cases where something meaningful does not align. Over time, analyst feedback can help the agent learn which patterns tend to signal fraud and which are more likely to be genuine mistakes.
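To make the triage step more concrete, here is a minimal Python sketch of one deterministic check an agent could run before reasoning about an address mismatch. It assumes both addresses have already been extracted from the document and the application. The abbreviation table, the category labels, the 0.9 similarity cut-off, and the function names are all hypothetical, and the standard library's `difflib.SequenceMatcher` stands in for whatever address-matching service a production system would use.

```python
import re
from difflib import SequenceMatcher

# Hypothetical abbreviation map; a real deployment would rely on a proper
# address-normalization service rather than this short list.
ABBREVIATIONS = {
    "street": "st",
    "avenue": "ave",
    "road": "rd",
    "apartment": "apt",
    "suite": "ste",
}


def normalize_address(raw: str) -> str:
    """Lowercase, replace punctuation with spaces, and shorten common words."""
    text = re.sub(r"[^\w\s]", " ", raw.lower())
    tokens = [ABBREVIATIONS.get(tok, tok) for tok in text.split()]
    return " ".join(tokens)


def classify_address_mismatch(document_address: str,
                              application_address: str,
                              typo_threshold: float = 0.9) -> str:
    """Return 'consistent', 'formatting', 'likely_typo', or 'review'."""
    if document_address == application_address:
        return "consistent"
    a = normalize_address(document_address)
    b = normalize_address(application_address)
    if a == b:
        # The difference disappears after normalization: formatting only.
        return "formatting"
    if SequenceMatcher(None, a, b).ratio() >= typo_threshold:
        # Nearly identical strings: plausibly a simple customer error.
        return "likely_typo"
    # Substantively different addresses are escalated for deeper review.
    return "review"
```

In this sketch only the `review` outcome would be escalated. The `formatting` and `likely_typo` outcomes could be resolved or monitored, and analyst feedback on those outcomes is what would justify tuning the threshold over time.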

For fraud, product, and operations teams, this can reduce unnecessary customer friction while still preserving control. Not every inconsistency needs the same response, and agents can help teams apply that nuance more consistently.

Use case 2: Transaction fraud detection

Transaction fraud detection is often where fragmented signals become most difficult to manage. An alert may indicate that something looks unusual, but it rarely gives analysts the full context they need to understand whether the activity is genuinely suspicious.

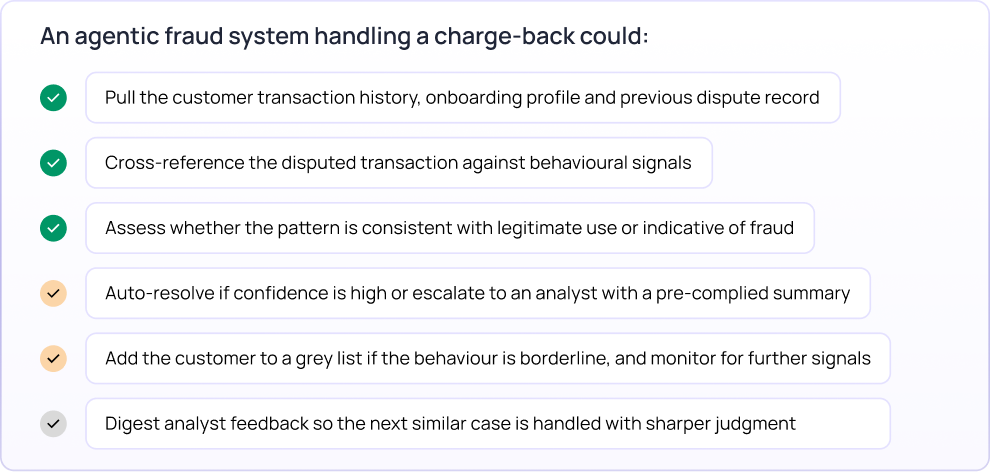

An agent can help by starting the investigation before the case reaches a human reviewer. When a flag is raised, the agent can pull together relevant context such as transaction history, onboarding information, prior disputes, behavioral signals, and other risk indicators. It can then assess the alert against the broader customer profile, rather than treating the transaction in isolation.
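The difference between judging a transaction in isolation and judging it against the customer's profile can be illustrated with a small Python sketch. The `CustomerContext` fields, the three-standard-deviation cut-off, and the dispute and account-age thresholds are hypothetical; in practice these signals would come from the transaction monitoring, onboarding, and dispute systems the agent queries, and the agent would weigh them rather than apply them mechanically.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field
from statistics import mean, pstdev


@dataclass
class CustomerContext:
    """Hypothetical bundle of context an agent might gather for one alert."""
    past_amounts: list[float] = field(default_factory=list)
    prior_disputes: int = 0
    account_age_days: int = 0


def amount_zscore(amount: float, history: list[float]) -> float | None:
    """How many standard deviations `amount` sits from this customer's mean."""
    if len(history) < 2:
        return None  # Too little history to say what "normal" looks like.
    diff = amount - mean(history)
    spread = pstdev(history)
    if spread == 0:
        # Every past amount was identical: any change is maximally unusual.
        return 0.0 if diff == 0 else math.copysign(math.inf, diff)
    return diff / spread


def contextual_flags(amount: float, ctx: CustomerContext) -> list[str]:
    """Reasons an alert still looks suspicious once customer history is considered."""
    flags: list[str] = []
    z = amount_zscore(amount, ctx.past_amounts)
    if z is None:
        flags.append("thin_history")
    elif z > 3:  # Hypothetical cut-off: more than 3 std devs above the norm.
        flags.append("amount_far_above_customer_norm")
    if ctx.prior_disputes >= 2:  # Hypothetical threshold.
        flags.append("repeat_disputes")
    if ctx.account_age_days < 30:  # Hypothetical threshold.
        flags.append("new_account")
    return flags
```

Under these assumptions, a purchase of 900 raises no amount flag for a customer whose past purchases range from 850 to 920, but it is far outside the norm for a customer whose past purchases range from 20 to 40. The same alert therefore produces different evidence for the agent to weigh.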

This added context helps teams apply more nuance. For example, two chargebacks may require very different responses depending on the customer’s history, recent behavior, and previous dispute record. In a lower-risk case, the agent may recommend resolution. In a more ambiguous case, it may escalate to an analyst with the key evidence already organized.

For fraud teams, this can make investigations faster and more focused without removing human judgment from the process. Analysts receive a clearer starting point, reviewers can see why a case was escalated, and feedback from each decision can help the agent improve over time.

What responsible deployment looks like

Responsible deployment starts with recognizing how immediate the impact of each decision can be. A false positive can block a legitimate customer. A false negative can allow financial loss. And a poorly explained decision can create operational, customer experience, and compliance challenges.

That is why fraud agents should be embedded into a governed workflow, with clear controls around what they can decide, what they should monitor, and when they need to escalate.

A responsible agentic fraud system should include:

Unified context: Agents need access to the signals required to assess risk in context, including onboarding data, transaction history, device and network signals, identity verification outputs, prior disputes, and case history.

Clear decision boundaries: Fixed thresholds and hard policy requirements should continue to be governed by rules. Agents are most useful where the work requires investigation, comparison, and synthesis across multiple signals.

Risk-based escalation: Not every mismatch or alert should be treated the same way. Low-risk cases may be resolved automatically, borderline cases may be monitored, and higher-risk or uncertain cases should be escalated for human review.

Human review: Analysts need a single view of the customer, alert, evidence, agent reasoning, and recommended next action so they can understand the case quickly and make an informed decision.

Feedback loop: Analyst approvals, modifications, and overrides should feed back into the system, helping the agent improve as fraud patterns and customer behavior evolve.

Audit and monitoring: Teams need explainable audit trails, performance tracking, and visibility into whether agent accuracy changes over time.

Together, these layers help fraud teams move from isolated AI assistance toward agentic workflows that are controlled, explainable, and built to adapt.
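The split between clear decision boundaries and risk-based escalation can be sketched as a routing function. In this minimal Python example, hard policy checks run first as fixed rules, and only then do the agent's synthesized risk score and confidence decide between auto-resolution, monitoring, and escalation. The `Assessment` fields, the blocklist rule, and every threshold are hypothetical placeholders for values a fraud team would set in its own policy. Each route also returns a reason string that could be written to an audit trail.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_RESOLVE = "auto_resolve"
    MONITOR = "monitor"
    ESCALATE = "escalate"
    BLOCK = "block"


@dataclass
class Assessment:
    """Hypothetical output of an agent's investigation of one case."""
    risk_score: float   # 0.0 (benign) to 1.0 (almost certainly fraud)
    confidence: float   # agent's self-reported confidence, 0.0 to 1.0
    device_on_blocklist: bool = False
    amount: float = 0.0


# Hypothetical policy values; real thresholds come from the team's own policy.
HARD_AMOUNT_LIMIT = 10_000.0
AUTO_RESOLVE_BELOW = 0.2
ESCALATE_AT_OR_ABOVE = 0.6
MIN_CONFIDENCE = 0.7


def route_case(a: Assessment) -> tuple[Route, str]:
    """Return the route for a case plus a reason suitable for an audit log."""
    # 1. Hard policy requirements are enforced by fixed rules, not the agent.
    if a.device_on_blocklist:
        return Route.BLOCK, "rule: device on blocklist"
    if a.amount > HARD_AMOUNT_LIMIT:
        return Route.ESCALATE, "rule: amount above hard limit"
    # 2. Uncertain assessments go to a human regardless of the score.
    if a.confidence < MIN_CONFIDENCE:
        return Route.ESCALATE, "agent: low confidence"
    # 3. Risk-based escalation on the agent's synthesized score.
    if a.risk_score < AUTO_RESOLVE_BELOW:
        return Route.AUTO_RESOLVE, "agent: low risk"
    if a.risk_score < ESCALATE_AT_OR_ABOVE:
        return Route.MONITOR, "agent: borderline risk"
    return Route.ESCALATE, "agent: high risk"
```

Running the rule checks before the agent's score means the agent cannot auto-resolve a case that policy says must be blocked or reviewed, which keeps the agent's discretion limited to the investigative middle ground.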

How to get started

A practical first fraud agent deployment usually starts with a specific investigation bottleneck.

For some teams, that may be application consistency checks. For others, it could be transaction fraud alert triage, chargeback dispute investigation, grey-list monitoring, case summary generation, or analyst feedback capture.

The best starting points have clear success metrics, defined escalation thresholds, and a reliable way to compare agent performance against the current process. This makes it easier to understand whether the agent is reducing manual effort, improving prioritization, and helping analysts focus on the cases that matter most.

Useful metrics to track include:

- Alert resolution time

- False positive reduction

- Percentage of cases auto-resolved

- Escalation precision

- Analyst override rate

- Customer friction or interruption rate

- Fraud loss impact

- Time to incorporate new fraud patterns into the workflow
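Several of these metrics can be computed directly from case records once analyst decisions and fraud outcomes are logged. The Python sketch below assumes a hypothetical `ReviewedCase` record and uses one possible definition of each metric: escalation precision as the share of escalated cases later confirmed as fraud, and override rate as the share of analyst-reviewed cases where the analyst's decision differed from the agent's recommendation. Teams may reasonably define these differently, for example by counting a legitimately ambiguous escalation as correct.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ReviewedCase:
    """Hypothetical record of one alert after the workflow has closed it."""
    agent_route: str               # "auto_resolve", "monitor", or "escalate"
    agent_recommendation: str      # e.g. "clear" or "fraud"
    analyst_decision: str | None   # None when no analyst reviewed the case
    confirmed_fraud: bool          # outcome once known, e.g. after a chargeback


def _share(numerator: int, denominator: int) -> float:
    return numerator / denominator if denominator else 0.0


def workflow_metrics(cases: list[ReviewedCase]) -> dict[str, float]:
    auto = [c for c in cases if c.agent_route == "auto_resolve"]
    escalated = [c for c in cases if c.agent_route == "escalate"]
    reviewed = [c for c in cases if c.analyst_decision is not None]
    return {
        # Share of all alerts closed without human review.
        "auto_resolve_rate": _share(len(auto), len(cases)),
        # Of the cases the agent escalated, how many were confirmed fraud.
        "escalation_precision": _share(
            sum(c.confirmed_fraud for c in escalated), len(escalated)),
        # Of analyst-reviewed cases, how often the analyst overrode the agent.
        "override_rate": _share(
            sum(c.analyst_decision != c.agent_recommendation for c in reviewed),
            len(reviewed)),
        # Confirmed fraud among auto-resolved cases: a proxy for loss exposure.
        "missed_fraud_in_auto_resolved": _share(
            sum(c.confirmed_fraud for c in auto), len(auto)),
    }
```

Comparing these numbers for the agent-assisted workflow against the same numbers for the current process is one way to make the comparison described above concrete.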

The goal is not simply to automate fraud review. It is to build a system that can reason across fragmented signals, escalate the right cases, and improve as fraud patterns evolve.

If your team is exploring how AI agents could strengthen fraud prevention without adding unnecessary customer friction, download the full guide for a practical framework to identify use cases, design guardrails, and deploy responsibly across financial services workflows.

Frequently Asked Questions (FAQs)

How can AI agents help fraud teams?

AI agents can help fraud teams investigate alerts, synthesize signals across systems, classify risk, resolve low-risk cases, escalate ambiguous cases, and generate case summaries for analysts.

What is cross-signal synthesis in fraud prevention?

Cross-signal synthesis means reasoning across outputs from multiple tools — such as identity verification, device intelligence, transaction monitoring, onboarding data, and prior disputes — to understand whether the full customer picture suggests fraud.

Can AI agents reduce false positives in fraud detection?

Agents can reduce the false positive burden by adding context before escalation, auto-resolving low-risk cases where appropriate, and routing only ambiguous or higher-risk cases to analysts.

What guardrails are needed for fraud agents?

Fraud agents need clear data access controls, escalation thresholds, human review workflows, audit trails, performance monitoring, and feedback loops to improve over time.