
AI agents in compliance and AML: A responsible deployment guide

For AML and compliance teams, the challenge is rarely a lack of effort. It is the growing pressure to move quickly, reduce customer friction, and catch real financial crime, all while maintaining the documentation, consistency, and oversight that regulated work requires.

That pressure shows up across the entire customer lifecycle. During onboarding, analysts may spend hours reviewing documents, checking ownership structures, running sanctions and watchlist screening, and assessing adverse media. Once customers are active, transaction monitoring systems can flood teams with false positives. And when cases escalate, investigators often need to piece together context from multiple systems before they can decide what action to take.

This is where agentic AI can start to help. AI agents are well suited to workflows that require investigation, synthesis, and careful routing: gathering information, connecting signals across systems, preparing case summaries, and surfacing higher-risk or uncertain cases for human review.

In Taktile’s guide to responsibly deploying AI agents in financial institutions, we explore how agents can support some of the most manual and judgment-intensive workflows in financial services, including AML and compliance.

For these teams, the opportunity is not just to process more alerts or complete checks faster. It is to create a more focused, context-rich way of working: one where agents help prepare the investigation, analysts stay in control of critical decisions, and every outcome can be reviewed, documented, and improved over time.

What AI agents change in AML and compliance

Traditional rules engines are useful for deterministic checks. They can flag transactions above a threshold or enforce fixed policy requirements. But they are less suited to the parts of AML and compliance work that require interpretation, investigation, and context.

Agents are different because they can work across unstructured information, connect data from multiple sources, follow investigative steps, and identify when a case needs human review. In practice, this can help teams move from raw alerts and manual research to pre-compiled case summaries, clearer risk signals, and more focused review.

The goal is not to replace compliance judgment. It is to reduce the investigative legwork that prevents analysts from spending enough time on the cases that genuinely require their expertise.

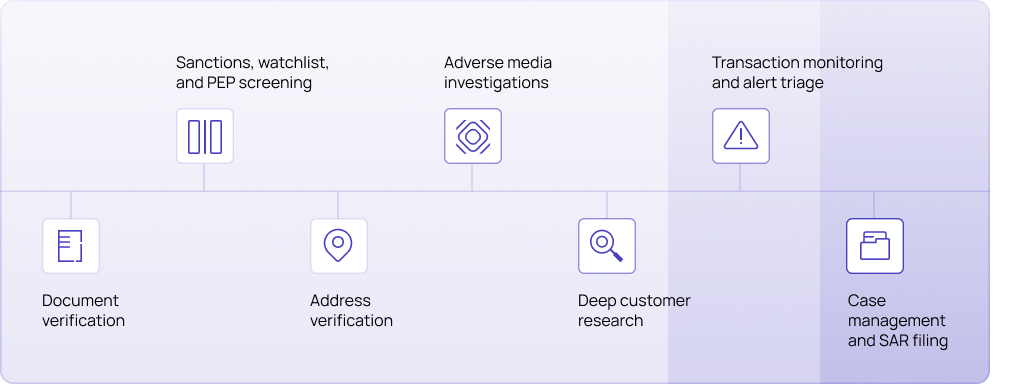

To make this more practical, it helps to look at the AML and compliance journey in three connected layers: customer onboarding, ongoing transaction monitoring, and case management and Suspicious Activity Report (SAR) filing. Each layer creates a different kind of operational burden, and each presents a different opportunity for agents to support the people behind the process.

Layer 1: Customer onboarding

During onboarding, compliance decisions often come down to checking customer-provided information against internal policies and external data sources. This can include business ownership information, source of funds, sanctions and watchlist data, address verification, and adverse media results.

Use case: Document verification

Document verification is a strong starting point because agents can do more than extract text. They can understand the meaning of information, compare it against other sources, and identify gaps.

Without agents, analysts may use OCR to pull data from documents, then manually compare that data against registry entries or verification sources. OCR can extract text, but it does not interpret whether the information is complete, consistent, or meaningful.

With agents, much of this preparatory work can be reduced. An agent can read customer documents even when the format is non-standard, cross-reference extracted information against registries and identity verification sources, identify inconsistencies, and summarize findings for the analyst.
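The cross-referencing step can be illustrated with a minimal sketch. It assumes the agent has already extracted fields from a document into a dictionary and fetched the matching registry entry. The field names, the `Finding` type, and the `cross_check` helper are hypothetical, chosen only for illustration, and real matching logic would need to be far more tolerant than simple string normalization.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    field: str
    issue: str  # "missing" or "mismatch"
    document_value: Optional[str]
    registry_value: Optional[str]


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so cosmetic differences are ignored."""
    return " ".join(value.lower().split())


def cross_check(document: dict, registry: dict, required_fields: list) -> list:
    """Compare extracted document fields against a registry entry.

    Returns one Finding per required field that is missing from the
    document or that disagrees with the registry value.
    """
    findings = []
    for name in required_fields:
        doc_val = document.get(name)
        reg_val = registry.get(name)
        if not doc_val:
            findings.append(Finding(name, "missing", doc_val, reg_val))
        elif reg_val is not None and normalize(doc_val) != normalize(reg_val):
            findings.append(Finding(name, "mismatch", doc_val, reg_val))
    return findings


# Hypothetical example data, not taken from any real registry.
document = {
    "legal_name": "Acme Trading  GmbH",
    "registration_number": "HRB 12345",
    "director": "",
}
registry = {
    "legal_name": "acme trading gmbh",
    "registration_number": "HRB 54321",
    "director": "J. Doe",
}

findings = cross_check(
    document, registry, ["legal_name", "registration_number", "director"]
)
for f in findings:
    print(f"{f.field}: {f.issue}")
```

The output of a check like this is what the analyst would see in the summary: a short list of gaps and inconsistencies rather than two raw records to compare by hand.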

For customers, this can mean fewer delays and clearer requests for missing information. For analysts, it means less time compiling data and more time reviewing the substance of the case.

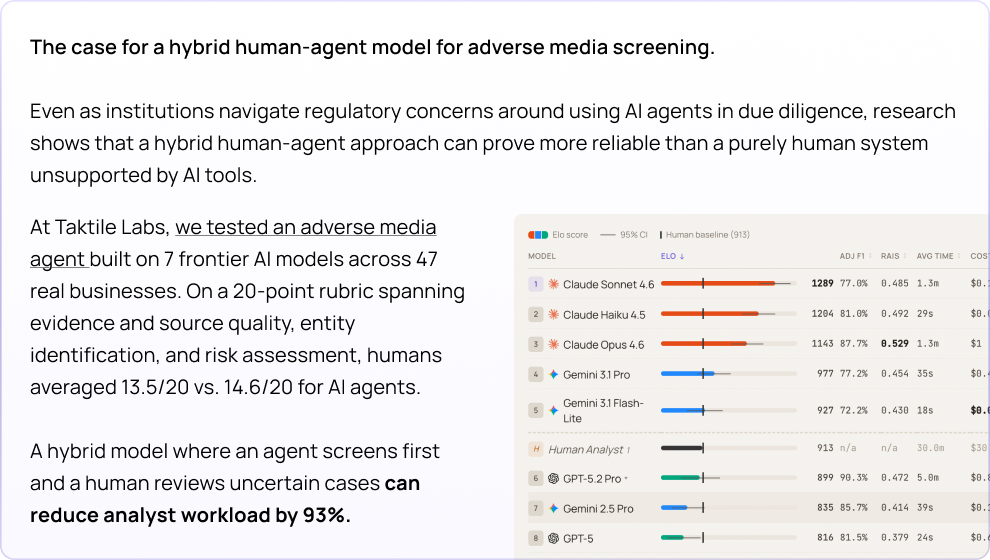

Use case: Adverse media screening

Adverse media is another area where agents can meaningfully reduce manual burden.

Many institutions already automate the initial search process. The harder work is evaluating whether the result is relevant, linking it to the correct entity, assessing risk level, documenting findings, and deciding the next step.

Agents can gather and read articles, connect results to the correct customer, filter out low-risk alerts, and summarize genuine signals for analyst review. As customer behavior changes, agents can also monitor for new adverse media signals and trigger review when concerns arise.

However, the guide also highlights an important caveat: errors in sanctions and politically exposed person (PEP) screening can carry immediate regulatory consequences. For this reason, agent deployments in these areas should use lower thresholds for human intervention and mandatory senior-level review of documentation.

This is a useful principle for responsible AML deployment more broadly: the higher the regulatory consequence, the more conservative the automation threshold should be.
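One way to picture this principle is a per-workflow escalation threshold, sketched below. The workflow names, the threshold values, and the `route` function are all illustrative assumptions and are not taken from the guide. The only idea carried over from the text is that sanctions and PEP workflows escalate at a lower risk score and go to senior review.

```python
# Hypothetical risk-score thresholds (0.0 to 1.0) at or above which a human
# must review. Lower values mean humans step in earlier.
ESCALATION_THRESHOLD = {
    "adverse_media": 0.6,
    "transaction_alert": 0.5,
    "sanctions": 0.1,
    "pep": 0.1,
}

# Workflows whose escalations require senior-level review (assumption
# modeled on the principle described in the text above).
SENIOR_REVIEW_WORKFLOWS = {"sanctions", "pep"}


def route(workflow: str, risk_score: float) -> str:
    """Decide who handles a case, given the workflow and an agent risk score."""
    # Unknown workflows default to a threshold of 0.0, so they always
    # reach a human reviewer.
    threshold = ESCALATION_THRESHOLD.get(workflow, 0.0)
    if risk_score >= threshold:
        if workflow in SENIOR_REVIEW_WORKFLOWS:
            return "senior_review"
        return "analyst_review"
    return "agent_resolves"


print(route("adverse_media", 0.3))  # agent_resolves
print(route("sanctions", 0.3))      # senior_review
```

The same score of 0.3 is handled by the agent in one workflow and escalated in another, which is the point: the threshold is a property of the regulatory consequence, not of the agent.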

Layer 2: Transaction monitoring

Once customers are onboarded, AML teams face the ongoing challenge of monitoring activity for suspicious behavior.

Traditionally, transaction monitoring systems have created a difficult tradeoff: cast a wide net and overwhelm analysts with false positives, or tighten thresholds and risk missing genuine threats. Rules-based systems can flag suspicious patterns, but they often lack the context needed to distinguish a low-risk anomaly from a meaningful risk signal.

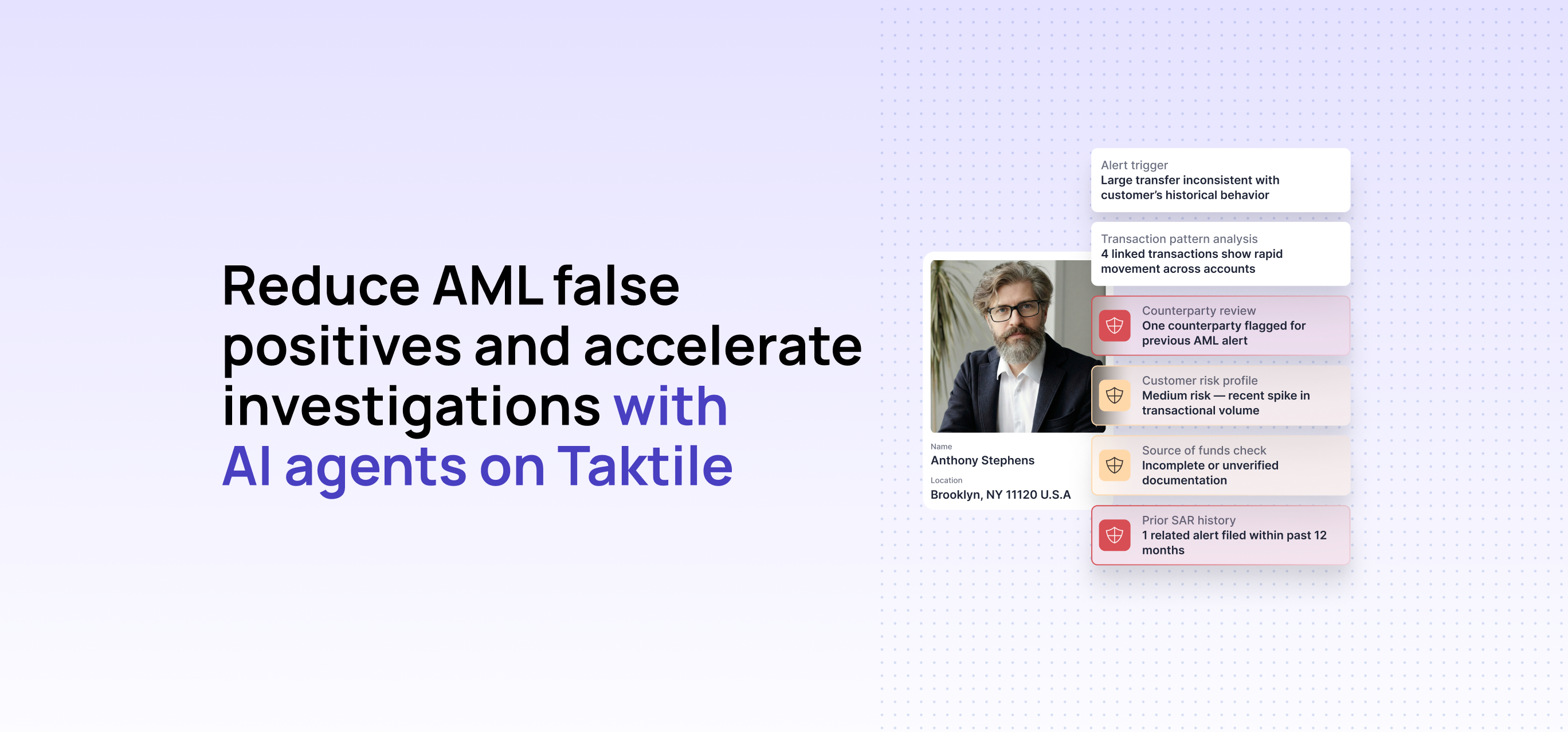

Use case: Intelligent alert triage

This is where intelligent alert triage can help. Instead of sending every triggered alert directly into an analyst’s queue, an agent can begin the investigation first.

When an alert fires, the agent can compile relevant context such as transaction history, customer background, behavioral patterns, prior alerts, and risk indicators. It can then assess the alert in light of that broader picture: resolving low-risk cases where appropriate, classifying the level of concern, and surfacing higher-risk or uncertain cases to a human reviewer with a pre-compiled summary.
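The triage flow described above can be sketched as follows. This is a simplified illustration, not a description of any particular product: the `CaseContext` fields, the three indicator checks, and their cut-offs are hypothetical, and the hard-coded rules stand in for whatever assessment the agent actually performs.

```python
from dataclasses import dataclass


@dataclass
class CaseContext:
    """Context compiled for one alert (all fields are illustrative)."""
    alert_id: str
    amount: float
    typical_monthly_volume: float
    prior_alerts: int
    customer_risk_rating: str  # "low", "medium", or "high"


@dataclass
class TriageResult:
    alert_id: str
    disposition: str  # "resolved_low_risk" or "escalate"
    concern: str      # "low", "medium", or "high"
    summary: str      # pre-compiled reasoning shown to the reviewer


def triage(ctx: CaseContext) -> TriageResult:
    """Classify an alert and decide whether a human needs to see it."""
    reasons = []
    if ctx.amount > 2 * ctx.typical_monthly_volume:
        reasons.append("amount exceeds twice the typical monthly volume")
    if ctx.prior_alerts >= 3:
        reasons.append(f"{ctx.prior_alerts} prior alerts")
    if ctx.customer_risk_rating == "high":
        reasons.append("customer rated high risk")

    # Zero indicators -> low, one -> medium, two or more -> high.
    concern = ("low", "medium", "high")[min(len(reasons), 2)]
    disposition = "resolved_low_risk" if concern == "low" else "escalate"
    summary = "; ".join(reasons) or "no risk indicators triggered"
    return TriageResult(ctx.alert_id, disposition, concern, summary)


unusual = triage(CaseContext("A-1", 50_000.0, 10_000.0, 0, "low"))
routine = triage(CaseContext("A-2", 500.0, 10_000.0, 1, "low"))
print(unusual.disposition, "-", unusual.summary)
print(routine.disposition, "-", routine.summary)
```

Whatever produces the assessment, the shape of the result matters: every disposition carries a summary of why it was reached, so a reviewer or auditor can check the reasoning.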

For analysts, this changes the starting point. Rather than beginning with a raw alert and manually gathering context, they receive a more complete case view with the relevant evidence already organized. Their approvals, modifications, and overrides can then feed back into the system, helping the agent refine its judgment over time.

For mature institutions, the value is not simply detecting more alerts. It is helping teams prioritize the alerts most likely to represent real risk, reduce time spent on false positives, and focus human expertise where it can have the greatest impact.

Layer 3: Case management and SAR filing

The value of an agentic AML system becomes especially clear once a case moves into deeper investigation and documentation.

At this stage, analysts are often working across multiple systems to understand what happened, why it matters, and whether the activity needs to be reported. They may need to review customer history, transaction patterns, prior alerts, risk scores, and supporting evidence before they can begin drafting a clear narrative.

Use case: SAR narrative generation

SAR narrative generation is a useful example of where agents can support the process without removing human control.

When a case requires a Suspicious Activity Report, an agent can help prepare the first draft by organizing the relevant facts, highlighting key risk indicators, and structuring the findings in the required format. Instead of starting from a blank page, reviewers can begin with a drafted narrative connected to the underlying customer information, transaction history, and evidence.

The analyst or reviewer remains responsible for the final assessment, refinement, and submission. But with the context already assembled and the narrative already framed, they can spend more time evaluating the substance of the case and less time pulling information together manually.
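As a rough sketch of what "a first draft connected to the evidence" could look like, the example below assembles case facts into a draft that is explicitly marked as pending review. The section layout, field names, and `draft_sar_narrative` function are hypothetical and do not reflect any regulator's required SAR format. In practice, an agent would generate the narrative text rather than fill a fixed template.

```python
def draft_sar_narrative(subject: str, transactions: list, indicators: list) -> str:
    """Assemble a reviewable first draft from case facts.

    Each transaction is a dict with "id" and "amount" keys (illustrative).
    The draft keeps transaction IDs so reviewers can trace every statement
    back to the underlying evidence.
    """
    total = sum(t["amount"] for t in transactions)
    lines = [
        "DRAFT - PENDING ANALYST REVIEW",
        f"Subject: {subject}",
        f"Activity: {len(transactions)} transactions totaling {total:,.2f}",
        "Risk indicators:",
    ]
    lines += [f"- {indicator}" for indicator in indicators]
    lines.append("Evidence references: " + ", ".join(t["id"] for t in transactions))
    return "\n".join(lines)


draft = draft_sar_narrative(
    "Example Co",
    [{"id": "T1", "amount": 9500.0}, {"id": "T2", "amount": 9800.0}],
    ["repeated deposits just below a reporting threshold"],
)
print(draft)
```

The draft status line and the evidence references are the important parts: the first keeps submission a human decision, and the second makes each claim checkable.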

For AML teams, this can help reduce documentation burden, improve consistency across case files, and make it easier for reviewers to understand how each conclusion was reached.

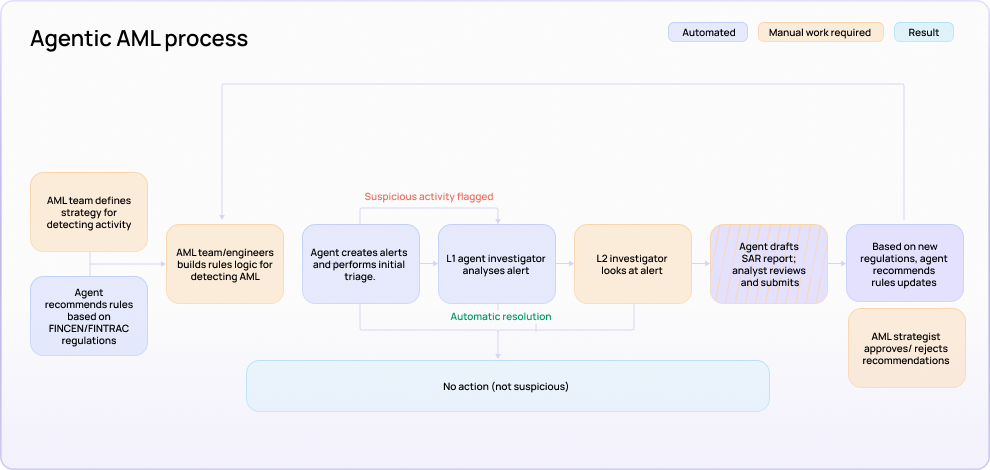

What responsible deployment looks like

For AML and compliance teams, responsible deployment starts with a simple principle: agents should not sit outside the workflow as isolated chatbots or research assistants. They should be embedded into a governed process where their actions are contextual, controlled, reviewable, and auditable.

That means designing around four core layers:

Context: Agents need access to the information required to reason reliably, including customer data, third-party screening results, transaction history, prior alerts, and relevant policy context.

Controls: Fixed requirements, thresholds, and non-negotiable policy constraints should continue to be governed by rules. Agents are most useful where the work requires investigation, synthesis, and judgment support.

Human review: Higher-risk cases, uncertain results, sanctions or PEP concerns, and reportable activity should be routed to qualified reviewers with the evidence, reasoning, and recommended next step clearly visible.

Monitoring: Every agent action should be auditable, including which data sources were used, which tools were called, what recommendation was made, and whether a human approved, modified, or overrode it.

Together, these layers help AML teams move from isolated AI assistance toward agentic workflows that are reliable enough to support regulated operations.
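To make the monitoring layer concrete, here is a minimal sketch of an audit record that captures the elements listed above: data sources, tools called, the recommendation, and the human outcome. The `AuditRecord` structure and its field names are assumptions for illustration. A production system would also need tamper-resistant storage and retention policies, which are out of scope here.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One auditable agent action (illustrative field names)."""
    case_id: str
    data_sources: list
    tools_called: list
    recommendation: str
    # Updated once a reviewer acts: "approved", "modified", or "overridden".
    human_action: str = "pending"
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialize a record as one JSON line with a stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


record = AuditRecord(
    case_id="C-42",
    data_sources=["customer_profile", "transaction_history"],
    tools_called=["sanctions_lookup"],
    recommendation="escalate",
)
log_line = to_log_line(record)
print(log_line)
```

Writing one structured line per action keeps the trail easy to query later, for example to count how often reviewers override a given type of recommendation.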

How to get started

A practical first AML deployment usually begins with a workflow where the pain is specific, measurable, and safe to test.

For some teams, that might be document verification during onboarding. For others, it could be adverse media screening, alert triage for a defined transaction monitoring scenario, case summary preparation, or SAR narrative drafting for human review.

The best starting points have clear boundaries, a known human baseline, and a defined escalation policy. This makes it easier to evaluate whether the agent is reducing manual work, improving consistency, and helping analysts focus on the cases that need deeper review.

Useful metrics to track include:

- Analyst hours per case
- False positive reduction
- Alert resolution time
- Percentage of cases auto-resolved
- Escalation accuracy
- Human override rate
- SAR drafting time
- Documentation completeness
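A few of these metrics can be computed directly from case records, as in the sketch below. The record fields and the metric definitions are assumptions made for illustration. For example, escalation accuracy is defined here as the share of escalated cases in which the reviewer confirmed a genuine risk, and a team may reasonably define it differently.

```python
def summarize(cases: list) -> dict:
    """Compute three pilot metrics from a list of case dicts.

    Each case has a "disposition" ("auto_resolved" or "escalated"). Escalated
    cases also carry "human_action" and "human_confirmed_risk" (illustrative).
    """
    total = len(cases)
    auto = [c for c in cases if c["disposition"] == "auto_resolved"]
    escalated = [c for c in cases if c["disposition"] == "escalated"]
    overridden = [c for c in escalated if c.get("human_action") == "overridden"]
    confirmed = [c for c in escalated if c.get("human_confirmed_risk")]
    n = len(escalated)
    return {
        "pct_auto_resolved": 100 * len(auto) / total if total else 0.0,
        "human_override_rate": len(overridden) / n if n else 0.0,
        "escalation_accuracy": len(confirmed) / n if n else 0.0,
    }


# Hypothetical sample of four cases.
cases = [
    {"disposition": "auto_resolved"},
    {"disposition": "auto_resolved"},
    {"disposition": "escalated", "human_action": "approved",
     "human_confirmed_risk": True},
    {"disposition": "escalated", "human_action": "overridden",
     "human_confirmed_risk": False},
]

metrics = summarize(cases)
print(metrics)
```

Agreeing on definitions like these before the pilot starts makes it possible to compare agent-assisted results against the known human baseline.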

Successful AML agent deployments are not necessarily the ones that automate the most on day one. They are the ones that build confidence over time by preserving control, improving visibility, and helping analysts apply their judgment where it matters most.

If your team is exploring how agents could reduce manual investigative work while preserving control and oversight, download the full guide for a practical roadmap to responsible deployment across compliance and AML, as well as credit underwriting and fraud use cases.

Frequently Asked Questions (FAQs)

How can AI agents help in AML compliance?

AI agents can help automate investigative work, summarize customer and transaction context, triage alerts, support adverse media review, and draft SAR narratives for human review.

Can AI agents reduce AML false positives?

Agents can help reduce the false-positive burden by adding contextual analysis to alert triage, resolving lower-risk cases where appropriate, and escalating higher-risk cases with supporting evidence.

Should AI agents handle sanctions or PEP screening autonomously?

The guide recommends lower thresholds for human intervention and mandatory senior-level review for sanctions and PEP-related workflows because errors can carry immediate regulatory consequences.

What controls are needed for AML agents?

AML agents need data access controls, escalation rules, human review workflows, audit trails, performance monitoring, and clear policies governing when agents can recommend, resolve, or escalate cases.